How B2B Technical Customer Support Leaders Should Measure AI in 2026

Hint: don't get tricked by deflection. Deflection is incomplete for technical support. The real metric is time to first useful response.

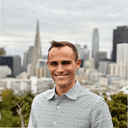

T2/T3 bottleneck is context gathering, not debugging.

If you lead Technical Support, you have heard the same promise a hundred times: "AI will deflect tickets."

That can be true for simple questions. But for technical B2B products, the biggest cost is not Tier 1. It is Tier 2 and Tier 3 work, where your best people spend a surprising amount of time just collecting facts before they can even start debugging.

This post is a plain-language way to think about the real problem, the real impact, and the simplest way to measure whether AI is helping.

The real problem: T2/T3 starts with a scavenger hunt

Most T2/T3 tickets do not fail because your team cannot solve them. They fail because the ticket does not include the context required to solve them quickly.

To make progress, an engineer often has to answer questions like:

- What environment, version, and configuration is this customer running?

- What changed recently (deploys, integrations, feature flags)?

- What do the logs and traces show for this tenant during the failure window?

- Have we seen this exact pattern before?

That context is spread across many systems: ticketing, CRM, product admin tools, observability (logs, metrics, traces), incident tools, code, and past cases.

In other words, the "work" starts before debugging starts.

The impact: AHT grows because research time grows

Average handle time (AHT) is the total time your team spends working a ticket. Even if your definition varies by channel, the idea is the same: it captures how long work takes end-to-end.

For technical support, the piece that quietly explodes is "research time":

- Pulling logs for the right tenant

- Matching IDs across systems

- Checking prior cases and known issues

- Gathering evidence before escalation

Many teams report spending 15 to 30 minutes on this initial investigation for complex tickets.

When that step repeats across every escalation, your AHT stays high even if your team is talented and fast once they have the facts.
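To put a rough number on that, here is a back-of-the-envelope sketch. The 15 to 30 minute range comes from the figure above; the monthly escalation count of 200 is purely a hypothetical input, so swap in your own volume.

```python
def monthly_research_hours(escalations_per_month: int, minutes_per_ticket: float) -> float:
    """Hours spent per month on context gathering alone, before debugging starts."""
    return escalations_per_month * minutes_per_ticket / 60


# Hypothetical volume: 200 escalations per month (an assumption, not a benchmark).
low = monthly_research_hours(200, 15)
high = monthly_research_hours(200, 30)
print(f"{low:.0f} to {high:.0f} hours per month")  # 50 to 100 hours per month
```

The function is deliberately simple: it only multiplies volume by research minutes, so it shows the scale of the scavenger hunt rather than the full cost of a ticket.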

Why "ticket deflection" is the wrong main metric for technical support

Deflection measures how often customers fix issues through self-service (Help Center, FAQs, chatbot) instead of opening a ticket.

That works for simple questions, but it breaks down for T2/T3 because:

- You need customer-specific facts (version, config, logs, recent changes) to diagnose

- The same symptom can have different causes across customers

- A wrong self-serve answer wastes time and can damage trust

- New edge cases show up before docs get updated

Deflection is useful, but it does not capture the biggest cost. The better question is: did we reduce the time to diagnose and resolve complex tickets?

The metric that shows ROI fastest: "time to first useful response"

Instead of measuring "Did AI solve it," measure "Did AI reduce the time wasted before solving starts."

A simple metric most leaders can implement quickly:

Time from ticket open to the first useful response, meaning a response that includes key context and a clear next step, not just "we're looking."

This matters because it directly measures the scavenger hunt.
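As a minimal sketch, the metric could be computed like this. The `Response` type and its two flags are hypothetical names invented for illustration; how a reply gets marked as having context and a next step (agent tagging, a review step, a classifier) is an assumption left open here.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List, Optional


@dataclass
class Response:
    sent_at: datetime
    has_key_context: bool  # hypothetical flag: reply cites environment, logs, or similar facts
    has_next_step: bool    # hypothetical flag: reply states a clear next step


def time_to_first_useful_response(
    opened_at: datetime, responses: List[Response]
) -> Optional[timedelta]:
    """Time from ticket open to the earliest reply with both context and a next step."""
    useful = [r.sent_at for r in responses if r.has_key_context and r.has_next_step]
    if not useful:
        return None  # no useful response yet
    return min(useful) - opened_at


opened = datetime(2026, 1, 5, 9, 0)
replies = [
    Response(datetime(2026, 1, 5, 9, 4), False, False),  # "we're looking"
    Response(datetime(2026, 1, 5, 9, 41), True, True),   # context plus next step
]
print(time_to_first_useful_response(opened, replies))  # 0:41:00
```

Note that the 9:04 acknowledgement does not count. A plain first-response-time metric would report 4 minutes here, while this one reports 41.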

If AI can pull the right context in a few minutes, your team can:

- Start debugging sooner

- Escalate with better evidence

- Shorten AHT on complex tickets

- Reduce the number of "back and forth" loops that extend time-to-resolution

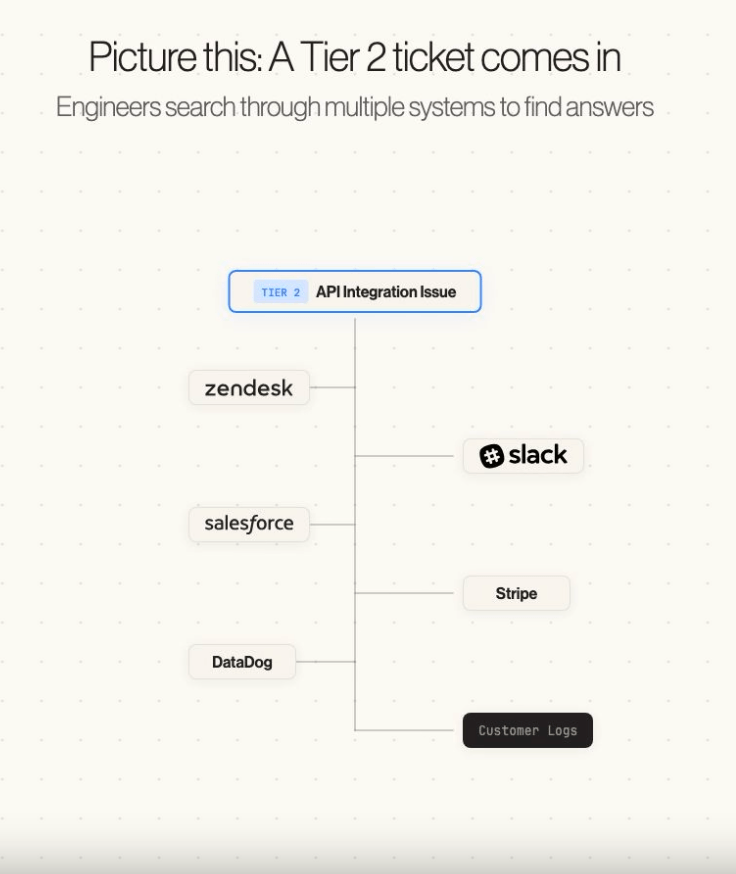

What should be "standard" in 2026: automate T1, then speed up T2/T3

1. Automate Tier 1 as the default

By 2026, customers expect fast self-serve for repeat questions. But that only works if:

- The knowledge is accurate

- The assistant uses the right sources

- Gaps get fixed, not ignored

If the knowledge is stale, you get a worse outcome than a human queue: you get confident wrong answers.

At Inkeep, we handle this with our own 'Ask AI' tool, which resolves Tier 1 questions quickly. The same product is trusted by the likes of Anthropic, Postman, and PostHog.

2. Treat Tier 2/3 as a context problem

For T2/T3, the fastest win is not "full automation." It is automatic context gathering and drafting so humans can make decisions sooner.

At Inkeep, we address this with one platform where customer support teams can build agentic workflows on top of their existing tools. The outcome looks something like this:

A simple operating plan for leaders

Step 1: Separate your measurements

Track these separately:

| Tier | What to measure |
|---|---|
| T1 | Self-serve coverage and answer quality |
| T2/T3 | AHT and "time to first useful response" |

This prevents a common mistake: celebrating T1 wins while T2/T3 cost keeps growing.
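A small sketch of why the split matters, using made-up numbers. The tier labels match the table above; the sample minutes and the function name are illustrative assumptions, not real data.

```python
from collections import defaultdict
from statistics import median


def median_minutes_by_tier(tickets):
    """tickets: iterable of (tier, minutes) pairs. Returns the median minutes per tier."""
    by_tier = defaultdict(list)
    for tier, minutes in tickets:
        by_tier[tier].append(minutes)
    return {tier: median(values) for tier, values in by_tier.items()}


# Illustrative sample only: (tier, handle time in minutes).
sample = [("T1", 3), ("T1", 5), ("T2/T3", 38), ("T2/T3", 52), ("T2/T3", 45)]

per_tier = median_minutes_by_tier(sample)
blended = median(minutes for _, minutes in sample)

print(per_tier)  # {'T1': 4.0, 'T2/T3': 45}
print(blended)   # 38
```

The blended figure sits between the two tiers and describes neither. Adding more fast T1 tickets would pull it down without any change in T2/T3 cost.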

Step 2: Standardize a "context packet" for escalations

Make it normal that every escalation includes:

- Environment/version/config snapshot

- Relevant logs/traces for the failure window

- Similar past cases or known issues

- A suggested next step

If your team does this manually today, you already know it works. The question is whether you can automate it.
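One way to make the packet enforceable is to give it a shape that can be checked before an escalation goes out. This is a sketch only: the `ContextPacket` class and its field names are hypothetical and simply mirror the four items listed above.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class ContextPacket:
    environment: str = ""                                    # environment/version/config snapshot
    logs: List[str] = field(default_factory=list)            # logs/traces for the failure window
    similar_cases: List[str] = field(default_factory=list)   # past cases or known issues
    suggested_next_step: str = ""

    def missing_fields(self) -> List[str]:
        """Names of the packet items that are still empty."""
        checks = {
            "environment": self.environment,
            "logs": self.logs,
            "similar_cases": self.similar_cases,
            "suggested_next_step": self.suggested_next_step,
        }
        return [name for name, value in checks.items() if not value]


packet = ContextPacket(environment="v4.2, EU region, SSO enabled")  # hypothetical values
print(packet.missing_fields())  # ['logs', 'similar_cases', 'suggested_next_step']
```

Whether a person or an automated workflow fills in the packet, a check like `missing_fields()` gives you a simple gate: an escalation is ready when the list is empty.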

Step 3: Close the loop every time a ticket is solved

The best support orgs treat solved work as reusable knowledge. Knowledge-Centered Service (KCS) has pushed this for years: capture knowledge while solving and improve it over time so solutions are easy to find later.

In 2026, the bar is higher:

- Detect when something new happened

- Update docs quickly

- Make the next answer self-serve by default

Where Inkeep fits

Inkeep is built around the "T1 plus T2/T3" reality:

- AskAI handles Tier 1 by answering customer questions in chat using your approved knowledge, with cited sources

- Support Copilot works inside your support workflow to analyze incoming tickets, pull context from the systems your team uses (like logs and internal tools), and draft a response with linked sources

- Automatic knowledge updates: when a ticket is marked solved, Inkeep checks whether anything new was learned that is not in your docs, then drafts an update so the next time the question comes in, AI can handle it cleanly

The graphic on the right is an example of how our agentic copilot works as an internal team enabler.

The goal is simple: T1 becomes self-serve, and T2/T3 gets faster because the "context hunt" is automated and the learnings turn into durable documentation.

If you want to learn more about agentic customer support, or implement it for your team, request a demo and meet our team.

We're proud to help companies like Anthropic, Postman, PostHog, and many more distinguished names with AI Customer Support.

Frequently Asked Questions

Technical tickets require live system state and unique customer context—not static docs that customers will simply accept.

Time to first useful response: a response that includes key context and a clear next step, not just 'we're looking.'

Research time grows. Teams spend 15-30 minutes gathering context before debugging can even start.

Automate T1 as self-serve, then speed up T2/T3 with automatic context gathering and drafting.